Soundcheck is often where the biggest misunderstandings between musicians and sound engineers happen. Performers are focused on how music feels on stage, while engineers are focused on how it translates to an audience. In the middle of this difference, one request appears again and again: “Can I get more volume?”

The truth is that volume is rarely the real solution. Most sound issues come from tone, balance, dynamics, or monitoring rather than loudness itself. Understanding a few technical terms can completely change how musicians communicate during soundcheck, turning a stressful process into a collaborative one. Here are a few words that can fix soundcheck faster than simply asking for more volume.

1. EQ

EQ, or equalization, is the process of adjusting different frequency ranges within a sound. Every instrument occupies its own frequency space, and when too many sounds overlap in the same range, the result feels muddy or unclear. Engineers use EQ to carve space so each instrument can be heard distinctly without increasing overall loudness.

For example, vocals may not need to be louder if guitars are reduced slightly in the same frequency range. A small EQ adjustment can suddenly make something sound clearer and more present. Understanding EQ helps musicians realize that clarity often comes from shaping tone rather than increasing volume.

2. GAIN

Gain controls how strong a signal is when it first enters the sound system. It is different from volume because it sets the level at the input stage, before the rest of the signal chain, so it affects how clean the signal is everywhere downstream. Proper gain staging ensures that sound remains clean and free from distortion.

If gain is set too high, even moderate volume levels can cause clipping or feedback. Engineers carefully adjust gain during soundcheck so the system has enough signal strength without overload. Consistent playing during this stage helps engineers build a stable foundation for the entire performance.

3. MONITOR MIX

The monitor mix is the sound musicians hear on stage through wedges or in-ear monitors. This mix is personalized for performers and is completely separate from what the audience hears through the main speakers. Many soundcheck frustrations come from confusing these two listening environments.

A drummer may need more bass guitar in their monitor, while a vocalist may prioritize keys or harmonies. Asking specifically for monitor adjustments allows engineers to tailor individual listening experiences without affecting the audience mix.

4. FRONT OF HOUSE

Front of house, commonly called FOH, refers to the sound heard by the audience. Engineers positioned at the FOH console balance instruments so listeners experience a cohesive and clear mix across the venue.

What feels quiet on stage may already be perfectly balanced in the audience area. Engineers must think about how sound travels through space, reflections from walls, and crowd absorption. Understanding FOH helps musicians trust that stage perception and audience perception are often very different.

5. COMPRESSION

Compression controls dynamics by reducing the difference between loud and soft sounds. Instead of making something louder, compression makes it more consistent. This allows vocals, drums, or instruments to remain audible even during busy musical moments.

Punch and presence often come from controlled dynamics rather than increased level. When engineers apply compression, they are shaping how sound behaves over time, helping performances feel tighter and more polished without overwhelming the mix.
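As a rough illustration of how compression narrows dynamics, the sketch below models only the static level curve of a hard-knee compressor. The function name `compress_db`, the -20 dB threshold, and the 4:1 ratio are assumptions chosen for the example, and real compressors also have attack and release behaviour that this ignores.

```python
def compress_db(level_db, threshold_db=-20.0, ratio=4.0):
    """Static hard-knee compressor curve: input level in dB -> output level in dB.

    Threshold and ratio are illustrative values, not recommended settings.
    """
    if level_db <= threshold_db:
        return level_db  # below the threshold the signal passes unchanged
    # above the threshold, every `ratio` dB of input yields 1 dB of output
    return threshold_db + (level_db - threshold_db) / ratio


quiet_db, loud_db = -30.0, -4.0  # hypothetical quiet and loud passages
range_before = loud_db - quiet_db
range_after = compress_db(loud_db) - compress_db(quiet_db)
print(f"dynamic range before: {range_before:.1f} dB, after: {range_after:.1f} dB")
```

With these assumed settings, the 26 dB gap between the quiet and loud passages shrinks to 14 dB, and the loud passage comes out quieter rather than louder.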

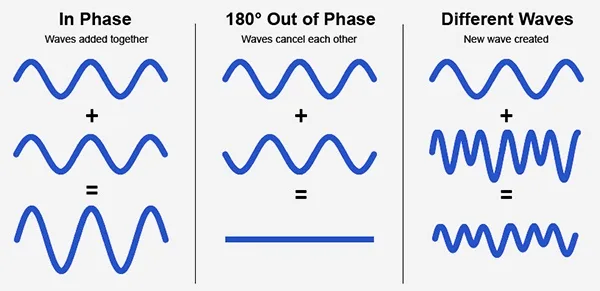

6. PHASE

Phase refers to the timing relationship between sound waves reaching microphones or speakers. Sound travels in waves, and when two microphones capture the same source at slightly different times, those waves may either reinforce each other or partially cancel each other out. When cancellation happens, certain frequencies disappear, making the sound feel thin, hollow, or lacking power even though the volume level has not changed.

This situation commonly occurs in live and studio environments where multiple microphones are used. A drum kit is a classic example. The snare drum might be captured by a close microphone, overhead microphones, and even nearby tom microphones simultaneously. Because each microphone sits at a different distance from the source, sound reaches them milliseconds apart. If these signals are not aligned properly, they interfere with each other, causing loss of low end or impact. Musicians often interpret this as weak sound, when the real issue is phase interaction rather than loudness.

Engineers correct phase problems through careful microphone placement, adjusting polarity, or time alignment within the mixing system. Sometimes moving a microphone just a few centimeters can dramatically improve fullness and clarity. Understanding phase helps musicians realize why engineers spend time positioning microphones during soundcheck instead of immediately adjusting levels. A well-aligned signal feels stronger, clearer, and more natural without needing additional volume or processing.
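To put numbers on the "milliseconds apart" idea, here is a small sketch that estimates the arrival delay between two microphones and the lowest frequency that would cancel if both were summed. The microphone distances are hypothetical, the speed of sound is an approximate room-temperature figure, and the cancellation formula assumes equal levels and matching polarity.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate, in air at room temperature


def arrival_delay_ms(near_m, far_m):
    """Extra time (ms) sound needs to reach the farther microphone."""
    return (far_m - near_m) / SPEED_OF_SOUND_M_S * 1000.0


def first_cancellation_hz(near_m, far_m):
    """Lowest frequency that arrives half a cycle late at the farther mic.

    Assumes far_m > near_m and that both mics are summed at equal level
    with the same polarity.
    """
    delay_s = (far_m - near_m) / SPEED_OF_SOUND_M_S
    return 1.0 / (2.0 * delay_s)


# Hypothetical snare setup: close mic at 5 cm, overhead at 1 m.
print(f"delay: {arrival_delay_ms(0.05, 1.0):.2f} ms")
print(f"first cancellation near {first_cancellation_hz(0.05, 1.0):.0f} Hz")
```

For this assumed setup the overhead hears the snare a little under 3 ms late, which puts the first cancellation at roughly 180 Hz, right in the body of the drum. Moving either microphone changes the delay and therefore shifts where the cancellation lands.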

7. FEEDBACK

Feedback occurs when a microphone picks up sound from a speaker and continuously re-amplifies it, creating a ringing or squealing noise. It is one of the most common challenges during live performances.

Feedback is usually caused by microphone placement, excessive gain, or frequencies that the microphone, speakers, and room happen to reinforce. Engineers often solve it through subtle EQ cuts or repositioning monitors rather than lowering overall volume. Small physical adjustments on stage can make a significant difference.
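One way to picture why a narrow EQ cut can stop feedback: ringing starts at any frequency where the gain around the microphone-to-speaker loop reaches 0 dB (unity) or more. The sketch below uses made-up loop gain figures and hypothetical helper names to show a single cut bringing the system back under that line.

```python
def ringing_frequencies(loop_gain_db):
    """Frequencies (Hz) whose round-trip gain is at or above unity (0 dB)."""
    return sorted(f for f, gain in loop_gain_db.items() if gain >= 0.0)


def apply_eq_cut(loop_gain_db, freq_hz, cut_db):
    """Return a copy of the loop gains with `cut_db` removed at one frequency."""
    adjusted = dict(loop_gain_db)
    adjusted[freq_hz] -= cut_db
    return adjusted


# Hypothetical round-trip gains (dB) for one vocal mic and its wedge.
loop = {250: -9.0, 800: -6.0, 2500: 1.5, 6300: -4.0}
print("ringing before cut:", ringing_frequencies(loop))

fixed = apply_eq_cut(loop, 2500, 3.0)  # 3 dB cut at the problem frequency only
print("ringing after cut:", ringing_frequencies(fixed))
```

Only the 2.5 kHz entry changes in this example; the other frequencies, and therefore most of the perceived level, are left alone.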

8. REVERB

Reverb, short for reverberation, is the persistence of sound after it is produced, created by reflections bouncing off surfaces in a space. In real life, when you clap in a large hall, the sound does not stop instantly. It continues briefly as reflections reach your ears at slightly different times. Reverb in audio systems recreates this natural phenomenon electronically, simulating how sound behaves in physical environments.

During soundcheck, musicians often request more reverb because it feels fuller or more comfortable while performing. However, excessive reverb can blur lyrics and reduce clarity for the audience, especially in live venues that already have natural reflections. Engineers therefore balance reverb carefully so the performance feels spacious without losing definition or intelligibility.

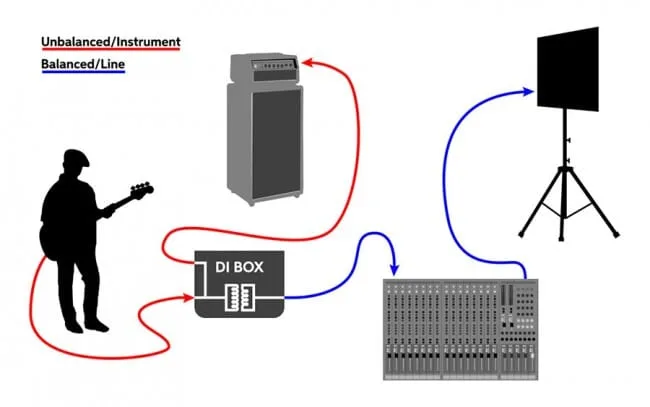

9. DI BOX

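A DI box, or direct injection box, allows instruments like keyboards, bass guitars, and electronic instruments to connect directly into the sound system. It converts the instrument's unbalanced, high-impedance output into a balanced, low-impedance signal suited to a microphone input, which reduces noise and preserves signal quality over long cable runs.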

Using a DI ensures a cleaner and more consistent sound compared to microphones alone. When engineers request a DI connection, they are improving clarity and reliability rather than complicating the setup process.

10. HEADROOM

Headroom refers to the space between normal signal levels and the point where distortion begins. It acts as a safety margin that allows performances to become louder or more energetic without damaging sound quality. Live performances are unpredictable, and musicians naturally play louder once adrenaline kicks in. Engineers create headroom during soundcheck so the system can handle these dynamic moments without clipping or distortion.
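Headroom is usually quoted in decibels. As a minimal sketch, assuming signal peaks are measured as linear amplitudes and clipping begins at 1.0, the margin can be computed like this (the peak values are hypothetical):

```python
import math


def headroom_db(peak_amplitude, clip_amplitude=1.0):
    """Distance in dB between the loudest peak and the clipping point."""
    return 20.0 * math.log10(clip_amplitude / peak_amplitude)


soundcheck_peak = 0.25  # hypothetical loudest peak during soundcheck
show_peak = 0.5         # same player at twice the amplitude once the show starts

print(f"{headroom_db(soundcheck_peak):.1f} dB of headroom at soundcheck")
print(f"{headroom_db(show_peak):.1f} dB left during the show")
```

Doubling the amplitude uses up about 6 dB, so a margin of roughly 12 dB at soundcheck still leaves about 6 dB when the performer digs in.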

11. LATENCY

Latency is the small delay between producing a sound and hearing it through speakers or monitors. In digital systems, sound must be processed before playback, which can create noticeable delay if not managed properly. Even a slight latency can make performers feel disconnected from their instruments or vocals. Engineers minimize latency through system optimization and routing decisions so musicians experience natural timing while performing.
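For a sense of scale, the sketch below estimates the delay added by a single digital buffer and compares it with the time sound takes to travel from a wedge to the performer. The buffer sizes, the 48 kHz sample rate, and the 1.5 m distance are assumptions; a real system adds converter and processing delays on top.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate, in air at room temperature


def buffer_latency_ms(buffer_samples, sample_rate_hz):
    """Delay (ms) introduced by waiting for one buffer of samples to fill."""
    return buffer_samples / sample_rate_hz * 1000.0


def acoustic_delay_ms(distance_m):
    """Time (ms) for sound to travel a given distance through air."""
    return distance_m / SPEED_OF_SOUND_M_S * 1000.0


for size in (64, 256, 1024):
    print(f"{size:5d} samples at 48 kHz: {buffer_latency_ms(size, 48_000):.2f} ms")

print(f"wedge 1.5 m away: {acoustic_delay_ms(1.5):.2f} ms")
```

Under these assumptions a 256-sample buffer adds a little over 5 ms, which is in the same range as standing about 1.5 m from a wedge.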

12. LINE CHECK

A line check happens before soundcheck and focuses only on verifying that every microphone and instrument is working properly. Engineers confirm that signals are reaching the console correctly without worrying about tone or balance yet. Unlike soundcheck, a line check is purely technical. Understanding this distinction helps musicians know why engineers sometimes ask for quick signals rather than full performances early in setup.

13. HIGH PASS FILTER

A high pass filter removes unnecessary low frequencies from a signal. Many instruments and vocals produce low rumble that does not contribute musically but makes mixes sound muddy. By filtering these frequencies, engineers create clarity and leave space for instruments that actually need low end, such as kick drum or bass guitar.
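The filtering idea can be sketched with a simple first-order high pass filter. This is an illustration only: the 100 Hz cutoff and the test tones are assumptions, and the filters on mixing consoles are often steeper than the 6 dB per octave slope modelled here.

```python
import math


def high_pass(samples, cutoff_hz, sample_rate_hz):
    """First-order (6 dB/octave) high pass filter over a list of samples."""
    if not samples:
        return []
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate_hz
    alpha = rc / (rc + dt)
    out = [samples[0]]
    for i in range(1, len(samples)):
        # pass changes between samples, let slow-moving content decay
        out.append(alpha * (out[-1] + samples[i] - samples[i - 1]))
    return out


def settled_peak(samples):
    """Largest absolute value in the second half, after the filter settles."""
    return max(abs(s) for s in samples[len(samples) // 2:])


rate = 48_000
rumble = [math.sin(2 * math.pi * 20 * n / rate) for n in range(rate)]    # 20 Hz
voice = [math.sin(2 * math.pi * 1000 * n / rate) for n in range(rate)]   # 1 kHz

print(f"20 Hz rumble after filter: {settled_peak(high_pass(rumble, 100, rate)):.2f}")
print(f"1 kHz tone after filter:   {settled_peak(high_pass(voice, 100, rate)):.2f}")
```

With the assumed 100 Hz cutoff, the 20 Hz rumble comes out at roughly a fifth of its original level while the 1 kHz tone is almost untouched.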

14. PATCH

Patching refers to connecting equipment through cables or routing signals within the console. Engineers may say they are “patching” an instrument, meaning they are assigning its signal to the correct input or output path. This step ensures every sound source reaches the intended speakers or monitors.

15. AUX SENDS

An aux send is a routing control that sends part of an audio signal to another destination, most commonly monitor mixes or effects processors. Each performer’s monitor mix is built using separate aux sends. This explains why different musicians hear different things on stage. Adjusting one performer’s monitor mix does not change what the audience hears.
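The independence of monitor mixes from the audience mix can be modelled in a few lines. In this sketch every name and number is hypothetical, and the aux sends are assumed to be pre-fader, meaning they tap the channel before the FOH fader.

```python
# Hypothetical channel levels after the preamp (linear, 0.0 to 1.0).
channels = {"vocal": 0.8, "bass": 0.6, "keys": 0.5}

# FOH faders feed the main speakers.
foh_faders = {"vocal": 1.0, "bass": 0.7, "keys": 0.6}

# One set of aux sends per performer (assumed pre-fader).
aux_sends = {
    "drummer": {"bass": 0.9, "vocal": 0.3},
    "vocalist": {"keys": 0.7, "vocal": 0.8},
}


def build_mix(levels, amounts):
    """Scale each listed channel by its send or fader amount for one destination."""
    return {name: levels[name] * amount for name, amount in amounts.items()}


foh_before = build_mix(channels, foh_faders)
aux_sends["drummer"]["bass"] = 1.0  # drummer asks for more bass guitar
foh_after = build_mix(channels, foh_faders)
drummer_mix = build_mix(channels, aux_sends["drummer"])

print("FOH unchanged:", foh_before == foh_after)
print("drummer hears:", drummer_mix)
```

Raising the drummer's bass send changes only the drummer's mix; the FOH mix is computed from a separate set of controls and stays the same.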

16. SUBWOOFER

Subwoofers are specialized speakers designed to reproduce very low frequencies that standard speakers cannot handle effectively. They add depth and physical energy to live sound. Because low frequencies travel farther and accumulate easily, engineers carefully control subwoofer levels to avoid overwhelming the mix or causing vibrations that reduce clarity.

17. GAIN STAGING

Gain staging refers to managing signal levels at every step of the audio path, from the instrument or microphone all the way to the speakers. Instead of relying on one control to fix loudness, engineers ensure that each stage of the system receives a clean and healthy signal level. Poor gain staging can introduce noise, distortion, or weak sound even if the final volume seems correct. During soundcheck, engineers carefully adjust input gain, channel levels, and output levels so the system works efficiently. For musicians, consistent playing dynamics help engineers set proper gain staging, which ultimately results in clearer sound.
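The point that a mix can be wrong "even if the final volume seems correct" is easy to show by adding up gains in decibels. The sketch below follows a signal through three stages; the stage names, gain values, and 0 dB ceiling are assumptions for illustration.

```python
def trace_levels(source_db, stages, ceiling_db=0.0):
    """Follow a signal through each stage and flag any stage above the ceiling."""
    level = source_db
    report = []
    for name, gain_db in stages:
        level += gain_db
        report.append((name, level, level > ceiling_db))
    return report


mic_db = -40.0  # hypothetical level arriving from the microphone

hot_preamp = [("preamp", 45.0), ("channel fader", -15.0), ("master", 0.0)]
balanced = [("preamp", 28.0), ("channel fader", 2.0), ("master", 0.0)]

for label, chain in (("hot preamp", hot_preamp), ("balanced", balanced)):
    print(label)
    for name, level, over in trace_levels(mic_db, chain):
        print(f"  {name:14s} {level:6.1f} dB  {'OVER' if over else 'ok'}")
```

Both chains arrive at the same final level, but the first one overloads at the preamp, and pulling the fader down afterwards cannot undo the distortion that has already happened.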

18. NOISE FLOOR

The noise floor is the constant background noise present in any audio system, caused by electronics and environmental interference. A clean mix keeps musical signals well above this noise level. Proper gain staging and quality connections help minimize noise, ensuring performances sound clear rather than hissy or dull.

19. CLIPPING

Clipping happens when an audio signal exceeds the system’s maximum capacity, causing harsh distortion. Unlike intentional musical distortion, clipping sounds unpleasant and can damage equipment. Engineers maintain safe levels and headroom during soundcheck to prevent clipping when performers naturally become louder during the show.
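A minimal sketch of what clipping does to a waveform, assuming a digital system whose maximum amplitude is 1.0: anything beyond the ceiling is simply flattened, which is the source of the harsh sound. The test tone and its level are hypothetical.

```python
import math


def hard_clip(samples, ceiling=1.0):
    """Limit every sample to the range [-ceiling, +ceiling]."""
    return [max(-ceiling, min(ceiling, s)) for s in samples]


rate = 48_000
# One cycle of a 100 Hz sine whose peaks reach 1.5x the assumed ceiling.
too_hot = [1.5 * math.sin(2 * math.pi * 100 * n / rate) for n in range(rate // 100)]

clipped = hard_clip(too_hot)
flattened = sum(1 for s in clipped if abs(s) == 1.0)
print(f"{flattened} of {len(clipped)} samples were flattened at the ceiling")
```

The rounded tops of the sine wave become flat plateaus, so the output no longer has the shape of the signal that went in.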

20. DRY AND WET SIGNAL

A dry signal is sound in its original form without effects, while a wet signal includes added processing such as reverb or delay. Engineers usually start with a dry signal during soundcheck to check clarity and tone before adding effects. The balance between dry and wet sound controls how close or spacious a performance feels. Too much wet signal can reduce clarity, so engineers blend both carefully to keep the sound expressive while remaining clear for the audience.
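The dry and wet balance can be thought of as a simple weighted blend. The sketch below assumes a linear crossfade controlled by a single `wet_amount` value and uses made-up sample values; on a real console the effect is often returned on its own fader instead of a single blend control.

```python
def blend(dry, wet, wet_amount):
    """Mix dry and processed samples.

    wet_amount runs from 0.0 (all dry) to 1.0 (all wet).
    """
    if not 0.0 <= wet_amount <= 1.0:
        raise ValueError("wet_amount must be between 0.0 and 1.0")
    return [(1.0 - wet_amount) * d + wet_amount * w for d, w in zip(dry, wet)]


dry_vocal = [0.0, 0.8, 0.4, 0.0]      # hypothetical unprocessed samples
reverb_return = [0.0, 0.1, 0.3, 0.2]  # hypothetical output of a reverb unit

print(blend(dry_vocal, reverb_return, 0.25))
```

A low `wet_amount` keeps the direct sound dominant, which is why starting dry and adding effects gradually makes it easier to hear when clarity begins to suffer.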

Soundcheck is not a rehearsal but a technical preparation. Playing at realistic performance levels helps engineers balance sound accurately. Random testing or sudden volume changes make it difficult to build a stable mix. Clear communication and consistent playing allow engineers to focus on refining sound instead of troubleshooting avoidable problems.

Understanding these terms does not mean musicians need technical expertise. It simply builds a shared language between performers and engineers. When communication improves, soundchecks become faster, performances feel better on stage, and audiences ultimately hear music the way it was meant to sound. Sometimes the fastest way to fix soundcheck is not more volume, but better vocabulary.